Tel: +86-755-26032463 Postal code: 518055

Building L, HIT Campus, University Town of Shenzhen, Xili, Shenzhen, China

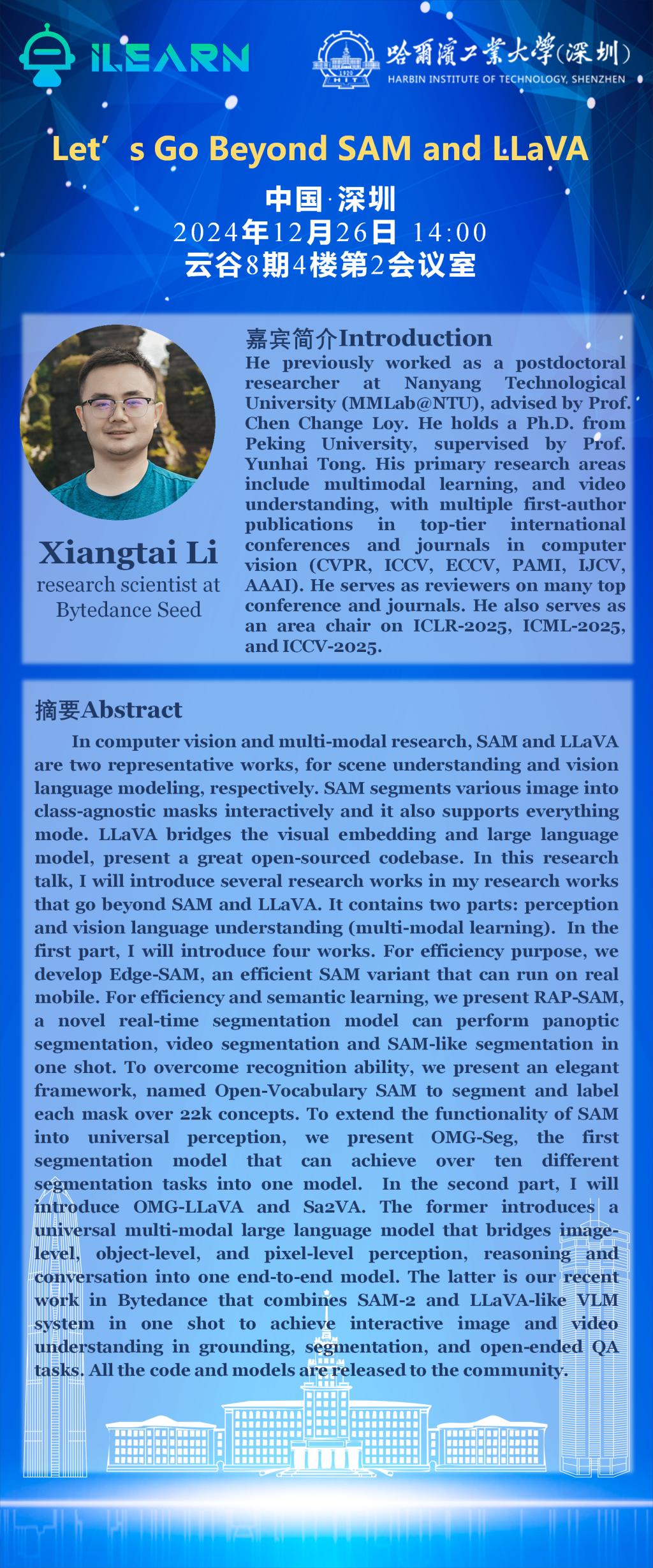

Speaker: Xiangtai Li

Title: Let's Go Beyond SAM and LLaVA

Date: December 26, 2024 Time: 10:00 AM ~ 12:00 noon

Venue: Meeting Room 2, 4th Floor, Yungu (云谷) Phase 8

Abstract:

In computer vision and multi-modal research, SAM and LLaVA are two representative works for scene understanding and vision-language modeling, respectively. SAM interactively segments images into class-agnostic masks and also supports an "everything" mode. LLaVA bridges visual embeddings and a large language model, and provides a strong open-source codebase. In this research talk, I will introduce several of my research works that go beyond SAM and LLaVA. The talk has two parts: perception, and vision-language understanding (multi-modal learning). In the first part, I will introduce four works. For efficiency, we develop Edge-SAM, an efficient SAM variant that can run on real mobile devices. For efficiency and semantic learning, we present RAP-SAM, a novel real-time segmentation model that can perform panoptic segmentation, video segmentation, and SAM-like segmentation in one shot. To overcome SAM's limited recognition ability, we present an elegant framework, named Open-Vocabulary SAM, that segments and labels each mask over 22k concepts. To extend the functionality of SAM to universal perception, we present OMG-Seg, the first segmentation model that can handle over ten different segmentation tasks in one model. In the second part, I will introduce OMG-LLaVA and Sa2VA. The former is a universal multi-modal large language model that unifies image-level, object-level, and pixel-level perception, reasoning, and conversation in one end-to-end model. The latter is our recent work at ByteDance that combines SAM-2 and a LLaVA-like VLM system in one shot to achieve interactive image and video understanding in grounding, segmentation, and open-ended QA tasks. All the code and models are released to the community.

About the speaker:

He previously worked as a postdoctoral researcher at Nanyang Technological University (MMLab@NTU), advised by Prof. Chen Change Loy. He holds a Ph.D. from Peking University, supervised by Prof. Yunhai Tong. His primary research areas include multimodal learning and video understanding, with multiple first-author publications in top-tier international conferences and journals in computer vision (CVPR, ICCV, ECCV, PAMI, IJCV, AAAI). He serves as a reviewer for many top conferences and journals. He also serves as an area chair for ICLR-2025, ICML-2025, and ICCV-2025.